Adaptive block size to improve the security budget

I’ve been discussing the idea of adaptive block sizes on X and in private chats, so I thought I would post it here to make it a little bit more formal and visible, and potentially gather some additional feedback. It is not a fully finished thing, just my current state of thinking about the subject.

Motivation

Kaspa’s fee market has great theoretical properties, thanks to the DAG parallelism. However, it struggles to activate in practice, since the network has a far greater capacity than the current economic demand for transactions.

While there is no fixed number for when a security budget is deemed “enough”, the current low fees coupled with a very fast emission schedule are worrying many people inside and outside the Kaspa community, which is itself a factor that plays against adoption.

Most development efforts focus on increasing the demand by adding additional functionalities and use cases, and that’s of course great.

However, I think that a functional fee market must also have an elastic supply, in order to guarantee sustained miners’ revenues across multiple demand regimes, avoiding starvation when demand is low and congestion if it ever becomes too high.

This text describes a way to do so that is sufficiently simple and conservative as to avoid excessive shocks in the way Kaspa works, but also ambitious enough to potentially alleviate many worries about its future sustainability.

The proposal

I simply propose to divide time into capacity epochs, and have miners regularly vote (by hash power) on the block size limit to be used for the following epoch. For example, capacity epochs could last two weeks and the voting window could be 3 days. A commit-then-reveal voting scheme could also be used, although I’m not sure if it’s strictly necessary.

Miners would vote on the delta (in kB) to be added to or subtracted from the current block size limit, by including that number in a new dedicated field of the blocks they mine. The protocol would then compute an X percentile of the submitted deltas, and clamp the resulting delta to a bounded range (for example [-Δmax, +Δmax]) to avoid excessive changes in a single epoch. That clamped value would be applied to the current capacity to determine the capacity for the following epoch.

The percentile used could be the median (X=50%) or possibly a higher one (say X=70%) to enforce a larger consensus for a block size decrease. Using asymmetric percentiles can help reduce the risk of sudden fee shocks, although it also introduces a tradeoff in the form of potential long-run drift, so the choice of X should be made carefully.

The maximum block size should of course also be fixed (most likely at the current level).
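The epoch update described above can be sketched in a few lines. This is only a minimal illustration, not a specification: the function names, the nearest-rank percentile convention, and all numeric values (Δmax of 5, a floor of 50, a ceiling of 125, and the sample votes) are assumptions made up for the example. Each element of the vote list stands for the delta carried by one block mined during the voting window, so hash power is reflected by the number of entries a miner contributes.

```python
def percentile_delta(block_deltas_kb, x_percent):
    """Nearest-rank percentile: the smallest vote such that at least
    x_percent of all votes are at or below it (assumed convention)."""
    ordered = sorted(block_deltas_kb)
    rank = max(1, (x_percent * len(ordered) + 99) // 100)  # integer ceiling
    return ordered[rank - 1]


def next_capacity_kb(current_kb, block_deltas_kb, x_percent=50,
                     delta_max_kb=5, floor_kb=50, ceiling_kb=125):
    """Capacity for the next epoch; every default is a hypothetical value."""
    if not block_deltas_kb:
        return current_kb  # no votes cast: keep the current capacity
    delta = percentile_delta(block_deltas_kb, x_percent)
    # Clamp the voted change to [-delta_max, +delta_max].
    delta = max(-delta_max_kb, min(delta_max_kb, delta))
    # Apply it, then keep the result between the floor and the fixed maximum.
    return max(floor_kb, min(ceiling_kb, current_kb + delta))


# Three of five blocks ask for a large decrease, two ask for a small increase.
votes = [-10, -10, -10, 4, 4]
print(next_capacity_kb(100, votes, x_percent=50))  # 95: median is -10, clamped to -5
print(next_capacity_kb(100, votes, x_percent=70))  # 104: the 70th percentile is +4
```

With the same votes, X=50% lets the 60% group shrink the block size, while X=70% does not, which is the asymmetry discussed above.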

The philosophy of the proposal is that of a slow block size evolution, not of a hyper reactive feedback system. We want the users to consider the network capacity as locally fixed, because that’s what generates the economic scarcity that leads to a security budget (if capacity reacted too quickly to a surge in economic demand, users would factor that into their optimization problem and make lower bids).

The slow evolution also maintains a good level of short term fee predictability that is important for wallets and L2s.

In summary, the idea is that of setting a dynamic baseline capacity that reflects the general stage of adoption of the network, and is not overly affected by temporary events such as the latest memecoin launch.

Analysis

Maximizing the security budget is equivalent to maximizing the miners’ revenues. Therefore it seems natural to leave to the miners (suppliers of block space) the decision as to how much capacity they want to offer to the network, as it happens in most markets. The key is however to make sure that this is not done at the expense of the users.

So how can we guarantee that miners won’t take advantage of this power by endangering the network just to make more money? This must be done by studying their incentives and by carefully designing safeguards so that they won’t act too selfishly.

Let’s first assume that miners play a single round: they observe a demand function and they individually ask themselves what network capacity would help them maximize their revenues. In this simplified case, miners’ incentives seem to be reasonably well aligned with those of the users. In particular:

- They won’t pick a block size that is too high, because that drives fees to zero and they won’t make any money. This is consistent with the interests of the users, because a network where miners make no money is insecure and ultimately useless.

- They won’t pick a block size that is too small either, because that would increase the equilibrium fees so much that it would exclude too many people, driving revenues down. This alignment is strongest when demand is sufficiently elastic; if demand were to become highly inelastic at the top end, miners might be tempted by persistent under-capacity, which is why conservative floors, slow adjustments, and a voting system that is robust to downward manipulation are important.

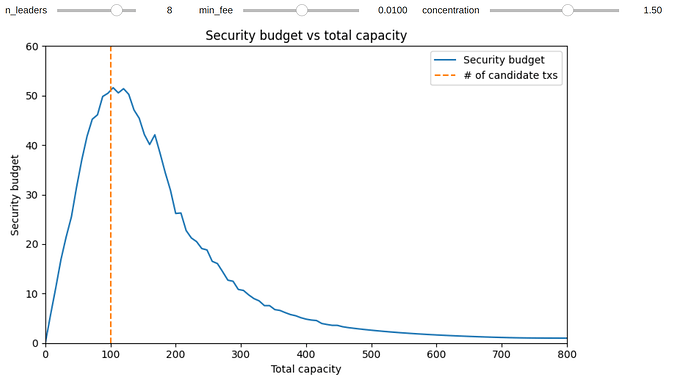

Simulations confirm the intuition that in a one-round game, it is optimal for miners to set a capacity close to the region where fee revenue is maximized, which in the model coincides with serving most economically meaningful transactions.

(See notebook at Google Colab to reproduce the results under different assumptions on the concentration of market demand and number of leaders in the round)
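Independently of the notebook, the two extremes of the one-round argument can be illustrated with a deliberately crude toy. It is not the model used for the simulations: it assumes a single uniform clearing fee (every included transaction pays the highest excluded bid), ignores DAG parallelism and duplicate inclusion, and uses a made-up linear demand of 100 bids. The function name and all numbers are hypothetical.

```python
def fee_revenue(bids, capacity):
    """Toy uniform-price market: the `capacity` highest bids are included and
    each pays the highest excluded bid (zero when nothing is excluded)."""
    ordered = sorted(bids, reverse=True)
    if capacity <= 0 or capacity >= len(ordered):
        # No space sold, or space is not scarce: no fee revenue either way.
        return 0
    clearing_fee = ordered[capacity]
    return capacity * clearing_fee


# Hypothetical linear demand: 100 users willing to pay 100, 99, ..., 1.
bids = list(range(1, 101))
revenue_by_capacity = {c: fee_revenue(bids, c) for c in range(0, 121)}
best_capacity = max(revenue_by_capacity, key=revenue_by_capacity.get)

print(revenue_by_capacity[100])  # 0: capacity covers all demand, fees vanish
print(revenue_by_capacity[5])    # 475: very scarce space, few payers
print(best_capacity, revenue_by_capacity[best_capacity])  # 50 2500
```

Where exactly the maximum falls depends entirely on the demand shape assumed; the only point of the sketch is that revenue collapses at both extremes and peaks somewhere in between.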

While this is of course a simplification, the result is somewhat reassuring, as it suggests that even in an environment focused purely on short-run revenue, miners still tend to offer substantial total space. That doesn’t imply that every transaction will be included, because some miners will pick up the same transactions, but typically a majority of them will (depending on the concentration of demand).

But of course miners don’t play for only one round, and that has both positive and potentially negative consequences. Positive because the long term involvement is an incentive to care about the network, and to avoid taking decisions that drive away participants or degrade its branding and appeal to potential future users (because that would affect the future profitability of mining). That is also an incentive to proceed with caution when evolving the network capacity, not necessarily optimizing only the one-period revenue, but rather leaning on the safe side of a slightly over-dimensioned capacity that still welcomes experimental use cases and is robust enough to demand fluctuations.

The potentially negative side of the multi-period game is that it opens the door for selfish strategies. Miners are not a monolith and may have different and sometimes opposing interests. While it is fairly clear what their incentives as a group are, it’s not immediately obvious whether they will coordinate to follow them.

For example, can a large miner enforce an excessively high capacity, in an attempt to reduce the overall mining profitability and drive smaller miners away from the competition? That is possible if they control enough hash power to push the chosen percentile in that direction. Conversely, can they shrink the block size to artificially drive fees up in the short term, even if it harms the long term appeal and sustainability of the network? Similarly, that would require controlling enough hash power to dominate the percentile vote.

The choice of X should therefore be based on which attack appears more worrying. If X=50%, the balanced choice, then both attacks would roughly require a majority of hash power. It should be noted that since the maximal capacity delta is also limited, these attacks would require keeping such a majority for a sustained amount of time (several months or years), not just for a couple of periods.
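To make the hash power thresholds concrete, here is a small sketch under simplifying assumptions: honest miners all vote for no change, the attacker always votes for the extreme delta, votes are counted per block with a nearest-rank percentile, and the block counts, Δmax and epoch length are invented for the example.

```python
def winning_delta(attacker_blocks, honest_blocks, attacker_delta,
                  honest_delta, x_percent):
    """Percentile outcome when one attacker faces uniformly voting honest miners."""
    votes = sorted([attacker_delta] * attacker_blocks
                   + [honest_delta] * honest_blocks)
    rank = max(1, (x_percent * len(votes) + 99) // 100)  # nearest-rank percentile
    return votes[rank - 1]


def epochs_to_shift(distance_kb, delta_max_kb):
    """Minimum number of consecutive winning epochs needed to move capacity."""
    return -(-distance_kb // delta_max_kb)  # integer ceiling


# X = 50%: pushing capacity down needs roughly half of the blocks.
print(winning_delta(450, 550, -5, 0, x_percent=50))  # 0: attack fails
print(winning_delta(550, 450, -5, 0, x_percent=50))  # -5: attack succeeds

# X = 70%: a decrease needs 70% of blocks, an increase only a bit over 30%.
print(winning_delta(690, 310, -5, 0, x_percent=70))  # 0
print(winning_delta(310, 690, +5, 0, x_percent=70))  # 5

# Moving capacity by 50 with a cap of 2 per two-week epoch:
epochs = epochs_to_shift(50, 2)
print(epochs, epochs * 14)  # 25 epochs, 350 days
```

The asymmetric case shows the tradeoff in choosing X: raising the bar for a decrease lowers the bar for an increase by the same amount, which is why a fixed maximum block size matters in that configuration.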

It is important to observe that whatever capacity is voted by the miners, it will hold for everyone. If it produces higher fee revenues, all miners will enjoy them, as proof of work naturally distributes them to everyone (in proportion to their hash power). Therefore if a miner wants to manipulate the capacity away from its optimal value, they will typically suffer losses too, at least in the short term. This does not rule out strategies motivated by relative advantage rather than absolute profit, but delta caps and long adjustment horizons raise the cost of such behavior. Furthermore, since capacity would be capped at the current value, there’s little risk of increases that would affect hardware requirements and the decentralization of the network.

An interesting side effect of this proposal is that if users or merchants are not entirely satisfied with the current state, it gives them a permissionless way to participate by contributing hash power and letting their preferences be reflected, which also has the collateral effect of increasing network security. With its 10 BPS, and soon probably 100 BPS (that is, more than 8.5 million blocks per day), Kaspa provides very frequent opportunities for such participation and signaling.

Another recurrent objection is the following: why have miners vote at all? Can’t we regulate the block size simply based on throughput, for example? The problem with that is that throughput is not enough to inform capacity changes in any meaningful way. A naive throughput-based rule would for example increase capacity whenever a bunch of passionate users set up a swarm of bots to artificially pump up the number of transactions, mistaking that for real market demand (while that’s arguably rather a sign that capacity should be reduced).

A better indicator to use for a heuristic capacity update would be the actual security budget, that is the total amount of fees collected over a given period of time. An algorithm could for example attempt to maximize it using a gradient ascent update of the type: delta_capacity(t) = const * delta_total_fees(t-1) / delta_capacity(t-1).

The problem with such a mechanical approach is that it is completely backward-looking, and cannot take prospective information into account (say, the imminent launch of an L2), nor other intangible factors that affect the medium term health (and therefore profitability) of the network. The second problem is that it can be extremely noisy and slow to converge, especially if it starts very far away from the optimal value in a region that is mostly flat. While such an algorithm can very well be implemented as the default one in a mining software, I believe it’s better not to hard-code it into the protocol, but rather leave the miners free to override it with human knowledge when necessary. This is not because miners are necessarily better forecasters, but because a human-in-the-loop mechanism can incorporate off-chain signals, while conservative caps bound the damage from poor forecasts.
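The gradient-ascent-style rule mentioned above could look roughly like the following in mining software. This is a sketch under strong assumptions: the fee curve `toy_fees` is an invented, noise-free, concave function, and the step constant, the clamp and the starting point are arbitrary. Real fee data would be noisy, which is exactly the weakness discussed above.

```python
def gradient_step(prev_delta_capacity, prev_delta_fees, step_const, delta_max):
    """delta_capacity(t) = const * delta_total_fees(t-1) / delta_capacity(t-1),
    clamped to [-delta_max, +delta_max]."""
    if prev_delta_capacity == 0:
        # Slope is undefined without a previous move; the rule proposes nothing.
        return 0.0
    raw = step_const * prev_delta_fees / prev_delta_capacity
    return max(-delta_max, min(delta_max, raw))


def toy_fees(capacity):
    """Hypothetical total fees per epoch, maximized at capacity 50."""
    return capacity * (100.0 - capacity)


def simulate(start, first_delta, epochs, step_const=0.05, delta_max=2.0):
    """Run the rule for a number of epochs and return the final capacity."""
    prev_capacity, capacity = start, start + first_delta
    for _ in range(epochs):
        delta = gradient_step(capacity - prev_capacity,
                              toy_fees(capacity) - toy_fees(prev_capacity),
                              step_const, delta_max)
        prev_capacity, capacity = capacity, capacity + delta
    return capacity


print(round(simulate(start=81.0, first_delta=-1.0, epochs=100), 2))
```

Even in this idealized setting the rule needs dozens of epochs to approach the optimum, and it proposes no change at all once the observed fee difference is zero, as in a flat region of the curve. A miner could use such a value as a default vote and override it when off-chain information suggests otherwise.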

One might also wonder whether miners have any interest in voting a different value than their desired one, to act in a strategic way. This seems unlikely as classic results suggest that when using the median (or any other percentile) and assuming single-peaked preferences (which appears a reasonable approximation here), the dominant strategy for voters is to report the truth. This argument is strongest if preferences remain approximately single-peaked; deviations due to MEV or heterogeneous business models are possible, which again supports slow and bounded adjustments.
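The truthfulness claim can be checked by brute force on a small example. The sketch below assumes that each voter casts a single vote, that preferences are single-peaked in the simplest possible way (a voter only cares about the distance between the outcome and their preferred delta), and that the outcome is the median. The grid of deltas and the number of voters are arbitrary choices for the illustration.

```python
import itertools
import statistics


def vote_outcome(reports):
    """Median of the reported deltas (lower median if the count is even)."""
    return statistics.median_low(reports)


def truthful_is_best(peak, other_reports, possible_reports):
    """True if no misreport brings the outcome closer to `peak` than honesty does."""
    truthful_gap = abs(vote_outcome(other_reports + [peak]) - peak)
    return all(
        abs(vote_outcome(other_reports + [report]) - peak) >= truthful_gap
        for report in possible_reports
    )


# Exhaustive check: 5 voters, deltas on a small integer grid.
grid = range(-3, 4)
all_ok = all(
    truthful_is_best(peak, list(others), grid)
    for others in itertools.product(grid, repeat=4)
    for peak in grid
)
print(all_ok)  # True
```

The check says nothing about voters whose payoff is not single-peaked in capacity, which is the caveat raised above for MEV and heterogeneous business models.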

Of course it is possible that, especially around the optimal value, the voted capacity may oscillate from one epoch to the other, but that shouldn’t be a problem if maximum delta is sufficiently small.

Another potential concern is related to MEV, since a smaller capacity can generate a higher risk of MEV extraction. This is certainly worth discussing. The high parallelism of the network reduces some MEV opportunities by providing many inclusion paths rather than a single bottleneck, and this property will continue to improve as block rates increase toward 100 BPS. At the same time, tighter capacity can intensify competition for inclusion and ordering. Ongoing efforts to study MEV-reducing techniques by the Core team are therefore highly relevant in this context.

The proposal certainly shifts some power from the users and developers to the miners, which is not entirely without risks. However I believe many worries can be alleviated by a suitable and conservative design that reduces oscillations, by focusing on the incentives, the potential for a more secure and appealing network, and considering the fact that this could motivate additional participants to engage in mining as well, in accordance with the original Satoshi vision.

Relation with alternative solutions

The main proposal that is being discussed to boost the security budget of Kaspa is the LSZ (Lavi/Sattath/Zohar) monopolistic auction system.

While it certainly has merits, it represents a significant departure from the current fee market framework.

My main concern with it is that it eliminates the possibility of price discrimination (ability to pay more for a better quality of service, i.e. faster inclusion), which is a very nice property that Kaspa currently has and is valuable for UX and for extracting revenue and maximizing the security budget under heterogeneous urgency.

Implementation risks

The advantage of adaptive block sizes is that they can be initially implemented in a very conservative way, aggressively limiting the amount of capacity change that miners can vote for in a single epoch and maintaining reasonable floors and ceilings. This would leave the network initially very close to its current state, while giving users and miners time to adapt to the idea and observe where it leads, with the possibility to halt it at any time with little harm if any new considerations arise after having observed it in action.